Enterprise AI initiatives often begin with excitement and end with disappointment. AI fails not because models are weak — it fails because the application environment is fragmented.

Every board wants it.

Every executive is exploring it.

Every vendor claims to enable it.

Yet most enterprise AI initiatives stall after the pilot stage or deliver results far below expectations.

Not because the models lack capability, but because the enterprise was never designed to host intelligence.

AI is not a feature. It is an architectural event. And without a holistic application framework, it will never scale beyond experimentation.

The Illusion of “Layered AI”

Many organizations attempt to “add AI” on top of existing systems:

- A chatbot on top of a CRM

- Document extraction layered over PDFs

- Analytics dashboards enhanced with LLM summaries

This creates the appearance of progress.

But underneath, the same issues remain: fragmented data, rigid legacy systems, manual human-only workflows, siloed access controls, and no operational intelligence layer.

The result is predictable: AI generates insights, humans still do the work, and the transformation never happens.

Core structural barriers consistently undermine AI adoption.

1. Fragmented Data Ecosystems

Take private market funds as an example. In most funds, data is spread across legacy applications and spreadsheets, managed by third-party administrators, and buried in unstructured documents.

AI cannot reason over fragmented truth. Intelligence requires a unified, normalized, continuously updated data foundation. Without it, AI is guessing.
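As a rough illustration of what "normalized and reconciled" can mean in practice, the sketch below merges commitment figures reported by two sources and flags investors whose figures disagree. The `Commitment` type, the source names, and the tolerance are all hypothetical and are not taken from any real system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Commitment:
    investor_id: str
    amount: float  # committed capital, in the fund's base currency
    source: str    # which system reported this figure (hypothetical names)


def reconcile(records, tolerance=0.01):
    """Return (unified view, investor ids whose sources disagree)."""
    by_investor = {}
    for rec in records:
        # Normalize the key so "lp-001 " and "LP-001" refer to the same investor.
        key = rec.investor_id.strip().upper()
        by_investor.setdefault(key, []).append(rec)

    unified, conflicts = {}, []
    for key, recs in by_investor.items():
        amounts = [r.amount for r in recs]
        if max(amounts) - min(amounts) > tolerance:
            conflicts.append(key)      # sources disagree: hold back for review
        else:
            unified[key] = amounts[0]  # sources agree: safe to expose
    return unified, conflicts


records = [
    Commitment("LP-001", 5_000_000.0, "fund_admin_report"),
    Commitment("lp-001 ", 5_000_000.0, "internal_spreadsheet"),
    Commitment("LP-002", 2_000_000.0, "fund_admin_report"),
    Commitment("LP-002", 2_500_000.0, "internal_spreadsheet"),
]
unified, conflicts = reconcile(records)
print(unified, conflicts)
```

Only the figures that every source agrees on reach the unified view. Anything in `conflicts` would go to a person for review instead of being handed to an AI layer as if it were settled.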

2. Legacy Application Landscapes Built for Transactions — Not Intelligence

Most enterprise systems were designed for recording transactions, enforcing controls, and processing workflows linearly. They were not designed for contextual reasoning, cross-domain analysis, autonomous execution, or continuous monitoring.

AI needs architectural fluidity. If the system cannot expose clean APIs, event streams, or structured context, the AI layer becomes ornamental — not operational.

True AI transformation requires workflow redesign — not automation of inefficiency.

CapHive’s Holistic Framework for Enterprise AI

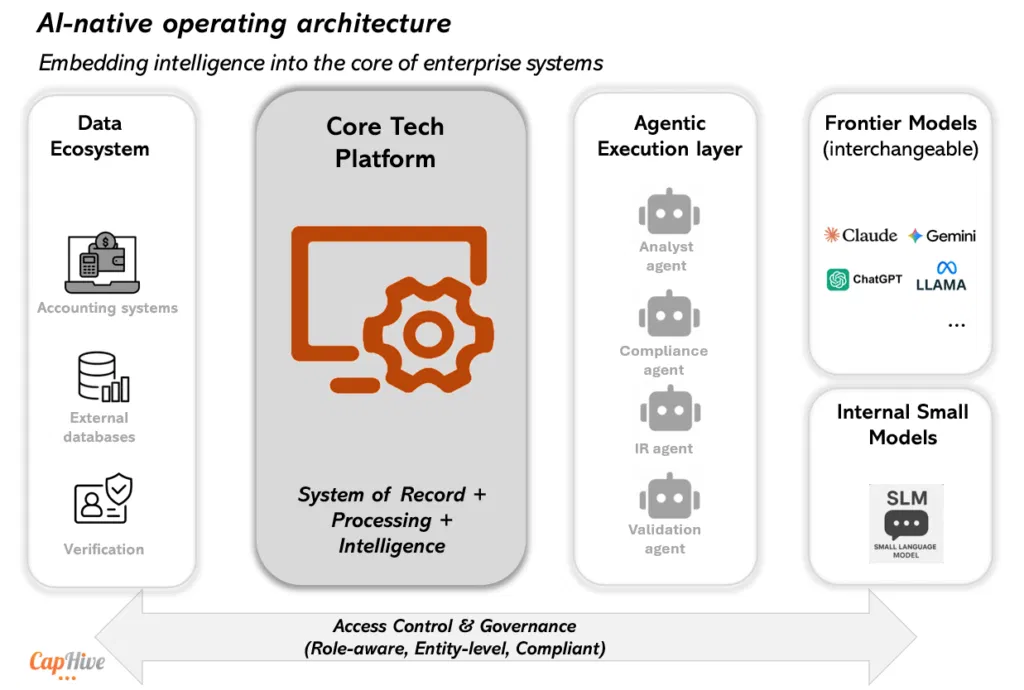

At CapHive, we believe AI success requires transformation across multiple architectural layers — not just model adoption. Intelligence must be embedded into the core of the system.

A modern data platform built to truly leverage AI must absorb the existing ecosystem.

Enterprises cannot rip and replace overnight. Hence, the platform must integrate with:

- Legacy systems

- External administrators

- Third-party vendors

- Regulatory reporting frameworks

It must normalize and reconcile data across fragmented sources to be able to give AI a single, reliable source of contextual truth. To enable this seamlessly for private capital markets, we built CapHive with the following principles:

1. Operate as a Full-Stack Platform

When the operational core — fund administration, portfolio tracking, investor management, compliance workflows — lives within the same intelligent system, AI becomes embedded in

2. Handle Structured and Unstructured Data Natively

The real intelligence of modern AI for a fund lies in understanding a multitude of documents, such as investment memos, legal agreements, board decks, financial statements, and regulatory filings.

CapHive addresses this with a modern platform that, in addition to storing a range of unstructured data securely, extracts structured signals, links documents to entities and workflows, and preserves auditability.

When structured and unstructured data converge, AI reasoning becomes powerful.

3. Access Controls as an Intelligence Boundary

In regulated industries, intelligence without governance is a liability.

CapHive’s comprehensive approach to AI enforces role-based access controls with user-level controls, investor confidentiality constraints, and regulatory compliance requirements, including data localization.

This ensures that the right user sees the right insight, AI actions respect permissions, and audit trails capture every automated decision. Trust is the currency of enterprise AI. Without embedded governance, scale is impossible.
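One way to picture access controls acting as an intelligence boundary is to filter context by role before any model sees it, and to log what was released. The sketch below is a minimal illustration under that assumption. The `Document` and `AuditLog` types, the role names, and `retrieve_for_user` are hypothetical and do not describe CapHive's actual implementation.

```python
from dataclasses import dataclass, field


@dataclass
class Document:
    doc_id: str
    allowed_roles: set   # roles permitted to see this document
    text: str


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, user, action, doc_ids):
        self.entries.append({"user": user, "action": action, "doc_ids": doc_ids})


def retrieve_for_user(user, role, docs, audit):
    """Return only documents the role may see, and log what was released."""
    visible = [d for d in docs if role in d.allowed_roles]
    audit.record(user, "retrieve_context", [d.doc_id for d in visible])
    return visible


docs = [
    Document("lpa-fund-1", {"compliance", "fund_ops"}, "Limited partnership agreement text"),
    Document("lp-007-statement", {"investor_relations"}, "Capital account statement text"),
]
audit = AuditLog()
visible = retrieve_for_user("analyst@example.com", "investor_relations", docs, audit)
print([d.doc_id for d in visible])
print(audit.entries)
```

Because filtering happens before the context is assembled, a model never receives text that the requesting user could not have opened directly.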

4. An Agentic Execution Layer

The next evolution of enterprise AI is not a chat interface. It is agentic execution.

An agentic framework enables AI to retrieve contextual data, perform financial computations, validate documents, trigger workflows, monitor compliance continuously, and escalate exceptions intelligently.

This transforms AI from assistant to operator — within defined boundaries.

Without an agentic layer, AI remains advisory. With it, AI becomes operational infrastructure.
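The idea of an operator working "within defined boundaries" can be sketched as a small dispatcher: the agent may only call tools on an allow-list, and anything else, including a tool that fails, is escalated rather than executed. The tool name, the registry, and the result format below are hypothetical and only illustrate the pattern.

```python
def nav_per_unit(net_assets, units):
    """Illustrative financial computation: net asset value per unit."""
    return net_assets / units


# Hypothetical registry of tools the platform exposes to agents.
TOOLS = {"nav_per_unit": nav_per_unit}


def execute(action, args, permitted):
    """Run a tool only if it exists and is permitted; otherwise escalate."""
    if action not in TOOLS or action not in permitted:
        return {"status": "escalated",
                "reason": f"'{action}' is outside the agent's boundary"}
    try:
        return {"status": "done", "result": TOOLS[action](**args)}
    except Exception as exc:
        # A failed computation goes to a human instead of being retried blindly.
        return {"status": "escalated", "reason": str(exc)}


permitted = {"nav_per_unit"}
ok = execute("nav_per_unit", {"net_assets": 1000.0, "units": 40.0}, permitted)
blocked = execute("wire_transfer", {"amount": 1.0}, permitted)
failed = execute("nav_per_unit", {"net_assets": 1000.0, "units": 0.0}, permitted)
print(ok, blocked, failed)
```

The boundary here is the `permitted` set. In a real system it would come from the same governance layer that controls what human users are allowed to do.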

5. A Flexible Core AI Layer with Model Fungibility

The model ecosystem evolves at extraordinary speed, and enterprise architecture must assume continuous evolution. The capabilities of LLMs have been rising rapidly, with different models offering new and enhanced use cases in quick succession, at a pace not seen before. That rapid rise also leaves open questions about sustainability and long-term pricing. An enterprise cannot afford to be locked into a single AI provider: it must be able to use the best available features and switch providers at will.

CapHive’s robust AI core enables:

- Integration with leading global LLMs

- ‘Bring Your Own Key’ support, giving customers greater control

- Switching models based on task complexity

- Optimizing for cost vs reasoning depth

- Leveraging frontier capabilities as they emerge

Over the next few months, we will also enhance the bouquet of choices with in-house locally deployed Small Language Models (SLMs) because not every task needs the superpower of an LLM.

Optionality is a strategic advantage. The future is hybrid intelligence: frontier reasoning combined with internal specialization.
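Switching models by task complexity while balancing cost against reasoning depth can be pictured as a simple routing rule: choose the cheapest model that meets the reasoning tier a task requires. The catalog below is a sketch. The model names, tiers, and prices are invented for illustration and do not refer to real providers or real pricing.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelOption:
    name: str
    reasoning_tier: int        # 1 = basic, 3 = frontier (illustrative scale)
    cost_per_1k_tokens: float  # made-up figures, for comparison only


# Hypothetical catalog mixing a local small model with hosted LLMs.
CATALOG = [
    ModelOption("local-slm", 1, 0.0002),
    ModelOption("mid-llm", 2, 0.003),
    ModelOption("frontier-llm", 3, 0.03),
]


def pick_model(required_tier, catalog=CATALOG):
    """Return the cheapest model whose tier meets the task's requirement."""
    capable = [m for m in catalog if m.reasoning_tier >= required_tier]
    if not capable:
        raise ValueError(f"no model offers reasoning tier {required_tier}")
    return min(capable, key=lambda m: m.cost_per_1k_tokens)


print(pick_model(1).name)  # routine extraction can stay on the small model
print(pick_model(3).name)  # complex reasoning goes to the frontier model
```

Because callers ask for a capability tier and not a named provider, replacing an entry in the catalog does not require changing the workflows that depend on it.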

AI Is an Architectural Decision, Not a Feature Roadmap

Enterprises that treat AI as a feature will remain in pilot mode.

Enterprises that treat AI as architecture will reshape how work is done.

The shift is profound:

- From static workflows to adaptive, agentic processes.

- From systems of record to systems of intelligence.

- From manual validation to automated assurance.

- From siloed data to unified reasoning layers.

The organizations that win in the next decade will not be those who adopt AI fastest. They will be those who redesign their application frameworks to host intelligence natively.

What This Means for Private Market Funds

Few industries are as structurally primed for AI-native transformation as private capital.

The ecosystem sits at the intersection of:

- Complex fund structures

- Bespoke requirements of every fund vehicle

- Increasing regulatory scrutiny

- Expanding investor bases

- Fragmented portfolio company data

- Document-heavy operations

- Manual compliance and reporting processes

General Partners are managing more vehicles, more co-investments, more SPVs, more reporting obligations — often without a proportional increase in operational infrastructure.

At the same time, the information density within private capital is extraordinarily high: capital call notices, distribution waterfalls, LP communications, portfolio company MIS data, deal memos, and regulatory filings.

This is not a data-poor industry. It is a data-fragmented one. The firms that unify their operational core — across fund administration, portfolio monitoring, compliance, and investor reporting — create the conditions for AI to operate natively.

In such an environment, AI can be leveraged to help:

- Continuously monitor fund compliance

- Detect anomalies across portfolio metrics

- Validate capital movements

- Assist in valuation reasoning

- Prepare investor reports automatically

- Surface risk before it becomes regulatory exposure

- Run bespoke, on-demand analysis using multiple data points across the fund and its portfolio investments

- Deliver customised periodic or event-based updates to GPs

Over time, this will also enable a range of additional use cases, including more complex analytics, virtual employees (i.e., AI bots) that operate on a cost-controlled, on-demand basis, real-time visibility and analytics for LPs, and tokenised fund units that operate within the boundaries agreed between GPs and LPs.

As complexity increases and regulatory expectations tighten, firms that adopt AI-native platforms will not merely reduce costs — they will operate with greater clarity, speed, and control. In a market defined by trust, governance, and performance, intelligence becomes a structural advantage.